- Blog

- Curren Y This Ain T No Mixtape Download

- Download Torrent The Color Purple

- How To Download Music On Ipod Touch Without Itunes

- Teen Wolf Season 6 Episode 8 Download Torrent

- Download Game Prince Of Persia 2 For Pc

- Thinkpad T61 Network Controller Driver

- Cara Download Game Catur Di Komputer

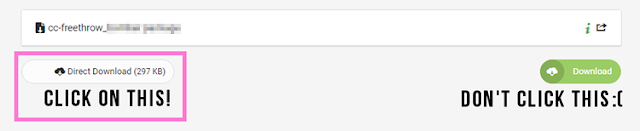

- How To Download From Filescdn

- Audio Hardware For Gta Vice City Free Download

- Download Game Blade And Soul Viet Nam

- Asus Ai Suite Download Windows 10

- Oracle Client And Networking Components Download

- Andy Grammer Good To Be Alive Mp3 Download

- Online English To Bengali Dictionary Free Download

- Auto Glass Software Free Download

- Trump The Art Of The Deal Download

- I Ninja Pc Download Torrent

- Wolf Of Wall Street Full Movie Free Download

- Comedy Night With Kapil Show Download

- You Ve Got A Friend In Me Download

- The Magicians Lev Grossman Pdf Download

- New Ps4 Download Game Saves

- Download Game Lost Saga Jar

- High Heels Te Nachche Mp3 Download

download.py

    # original source: https://www.reddit.com/r/opendirectories/comments/6vysrh/lots_of_italian_books_is_there_any_way_to/dm46nig/
    # plus a few tweaks from me.
    # This is a Python 2.7 script; you will also need Requests and BeautifulSoup.
    # If you have virtualenv installed:
    # $> virtualenv env
    # $> source env/bin/activate
    # $> pip install requests beautifulsoup
    # $> python download.py

    import codecs
    from time import sleep
    import requests
    import sys
    import os
    from subprocess import Popen, STDOUT, PIPE
    from BeautifulSoup import BeautifulSoup
    import HTMLParser

    curr_dir = os.path.dirname(os.path.abspath(__file__))
    dldir = os.path.join(curr_dir, 'downloads')
    if not os.path.exists(dldir):
        os.mkdir(dldir)


    def down_filescdn(url, backoff=None):
        link = None
        if url:
            # Getting id and rand
            rand = 'hmtr5wcosqa5m55xmlf7ax2xfzl4loqi2m6rrry'
            id = url[-12:]
            myheaders = {'Pragma': 'no-cache',
                         'Origin': 'https://filescdn.com',
                         'Accept-Encoding': 'gzip, deflate, br',
                         'Accept-Language': 'en-US,en;q=0.8,et;q=0.6,it;q=0.4,nb;q=0.2',
                         'Upgrade-Insecure-Requests': '1',
                         'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.82 Safari/537.36',
                         'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8',
                         'Cache-Control': 'no-cache',
                         'Referer': 'https://filescdn.com/fe9qupy2n90u',
                         'DNT': '1',
                         'Connection': 'keep-alive'}
            mycookies = {'t_pop': '1', 'lang': 'english'}
            datadict = {'op': 'download2', 'id': id, 'rand': rand, 'referer': '', 'method_free': '', 'method_premium': ''}
            url = 'https://filescdn.com/' + id
            # Write the response to a temp file and read it back from the same path.
            tmp_path = os.path.join(curr_dir, 'tmp.txt')
            with requests.post(url, data=datadict, cookies=mycookies, headers=myheaders) as resp:
                with codecs.open(tmp_path, 'w', 'utf-8') as tmpf:
                    tmpf.write(resp.text)
            with codecs.open(tmp_path, 'r', 'utf-8') as f:
                html = f.read()
            soup = BeautifulSoup(html)
            try:
                name = soup.findAll('h6')[0].text
                name = HTMLParser.HTMLParser().unescape(name)
            except IndexError:
                # No <h6> title on the page: either throttled or not a download page.
                if 'You have to wait ' in html:
                    # Double the wait on every retry: 2, 4, 8, ... seconds.
                    if backoff:
                        backoff = backoff + backoff
                    else:
                        backoff = 2
                    print '!! ERROR, possible throttling, trying again in {} seconds.'.format(str(backoff))
                    sleep(backoff)
                    # Return the result of the retry instead of discarding it.
                    return down_filescdn(url, backoff)
                return False
            links = soup.findAll('a')
            for l in links:
                myurl = l.get('href')
                if not myurl:
                    continue
                if myurl.endswith(('.epub', '.pdf', '.rar', '.mobi', '.zip', '.azw3', '.azw4', '.lit')):
                    link = myurl
                    break
            print link
            # download
            if link is not None:
                response = requests.get(link, stream=True)
                response.raise_for_status()
                with open(os.path.join(dldir, name), 'wb') as handle:
                    for block in response.iter_content(1024):
                        handle.write(block)
                print '* File Downloaded'
            else:
                # Report the page URL; myurl may be None or unset at this point.
                print '* File Skipped : {}'.format(url)
            status_save(name)


    def build_list(root, start, end, interval):
        # eg https://filescdn.com/f/l1tn8a2wt0xn/317/
        mystatus = status_load()
        print '* Downloading from list'
        while (start < end):
            print '* Getting page: ' + str(start)
            html = requests.get(root + '/' + str(start)).content
            soup = BeautifulSoup(html)
            divs = soup.findAll('div')
            for d in divs:
                if str(d.get('class')) == 'text-semibold':
                    name = d.findAll('a')[0].text
                    name = HTMLParser.HTMLParser().unescape(name)
                    # Skip entries until the last saved name is reached.
                    if mystatus and name[0:100] != mystatus[0:100]:
                        print '- Skipping, already downloaded'
                        continue
                    if mystatus and name[0:100] == mystatus[0:100]:
                        print '- Resuming download'
                        mystatus = None
                        continue
                    link = 'http:' + str(d.findAll('a')[0].get('href'))
                    print '{}\t\t{}'.format(link, name.encode('utf-8'))
                    down_filescdn(link)
                    sleep(interval)
            start += 1


    def status_save(name):
        with open('status.ini', 'w') as f:
            f.write(name.encode('utf-8'))


    def status_load():
        try:
            with open('status.ini', 'r') as f:
                data = f.read().replace('\n', '').replace('\r', '')
            print '* Found place for resuming'
            name = HTMLParser.HTMLParser().unescape(data.decode('utf-8'))
            return name
        except IOError:
            return None


    if __name__ == '__main__':
        start_page = 1
        end_page = 317
        sleep_interval = 5
        build_list('https://filescdn.com/f/l1tn8a2wt0xn/', start_page, end_page, sleep_interval)
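The throttling branch in `down_filescdn` waits 2 seconds on the first failure, doubles the wait on each further failure, and retries by calling itself. Below is a minimal sketch of the same doubling backoff written as a bounded loop. It is in Python 3 rather than the script's Python 2.7, and `retry_with_backoff`, `fetch`, `max_tries`, `first_delay`, and `sleep_fn` are hypothetical names that do not appear in the original script.

```python
import time


def retry_with_backoff(fetch, max_tries=5, first_delay=2, sleep_fn=time.sleep):
    """Call fetch() until it returns something other than None.

    fetch is a hypothetical zero-argument callable that returns None
    when the server answers with a throttling page. The delay starts at
    first_delay seconds and doubles after each failed attempt, giving
    the same 2, 4, 8, ... sequence as the script.
    """
    delay = first_delay
    for attempt in range(max_tries):
        result = fetch()
        if result is not None:
            return result
        # Do not sleep after the final attempt; there is nothing left to retry.
        if attempt < max_tries - 1:
            sleep_fn(delay)
            delay *= 2
    return None
```

Capping the attempts with `max_tries` is a design choice of this sketch: the recursive version in the script has no upper bound, so a persistent throttle would keep it retrying with ever longer waits. Passing the sleep function in as a parameter makes the loop easy to exercise without actually waiting.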
